The Public Lab Blog

stories from the Public Lab community

Introducing the MapKnitter: Community Atlas project

Some of you (especially in our coding community) have noticed the major increase in work on our MapKnitter map-making website over the past few months. Today we're announcing that, with support from Google, we have launched a new pilot project to make it easier than ever for communities to build their own "Community Atlas." Using MapKnitter and the PublicLab.org platform, we'll be helping a new generation of community mappers use kites and balloons to create their own Community Atlases with our open source tools, and to tell stories about local issues, from environmental problems to community projects.

Fellowships

As part of this project, we've launched a fellowship, and begun working with two mapping fellows with community-based balloon or kite mapping projects. These fellows, who you'll hear more from soon, will be doing some amazing community mapping work while also working with the Public Lab coding community throughout the map-making process, helping to identify how MapKnitter.org can better support the needs of mappers around the world.

MapKnitter overhaul

This pilot project involves a thorough reboot of the now-ten-year-old MapKnitter codebase, including major overhauls of the user interface, design, and back-end systems. We're also rebuilding our map exporting system (which long-time users will know is quite slow) on Google Cloud infrastructure, and developing a range of new open source utilities and libraries to make this possible. More on this soon!

We've assembled an amazing team of code fellows from our community to lead this work on what, given the pilot scope of the project, has been a very fast timeline. Sasha, Cess, Stefanni, Sidharth, Gaurav, Sagarpreet, Varun, and many others have helped make this possible, including Pranshu, Kaustabh, Alax, Avkaran, and more. You can see some of the amazing activity on this project here: https://github.com/publiclab/mapknitter/pulse/monthly

We've also recently published an early update to the site, incorporating a range of fixes, updates, and improvements; check it out: https://mapknitter.org --- but this is only the beginning, so keep your ears open!

Summer of Code

Google also hosts the Google Summer of Code program, which we have participated in for six years, and which is supporting 13 fellows with us this coming summer (as was just announced!). Over recent years, we've steadily refined a workflow that welcomes new code contributors as they get plugged into our community, and aims to support building skills incrementally and cooperatively. Google has been a key supporter of these efforts. Learn more about our diversity and inclusivity efforts in coding by checking out our Software Contributor Survey results, posted recently.

Stay tuned for more on this project, from stories from our fellows to demos of our new MapKnitter systems!

Follow related tags:

balloon-mapping kite-mapping grassrootsmapping mapknitter

My first blog post for Outreachy

Who am I?

Hi, this is Lekhika Dugtal. I'm a pre-final-year student pursuing a Bachelor of Technology in Information Technology and a Master of Business Administration at the Indian Institute of Information Technology, Allahabad. I come from a place called Dharma valley, but I now live in New Delhi, India.

My Journey

I had never been an open source enthusiast. But I started contributing in earnest in January 2019, in an open source competition called Opencode. And now I'm in love with it.

My first step toward contributing to an organisation was in coala, where my contribution seemed a minuscule thing: a one-line change adding an asciinema URL.

It was a documentation-based newcomer issue.

I made the silliest mistakes. But for me, it was a huge step into the world of open source. As a newcomer, I left this PR to its own fate, though I later learned that it somehow got merged, which was surprising.

My journey was bumpy, with early difficulties in rebasing and in understanding new frameworks. But as I moved forward, believe me, it was beyond amazing.

I have always been curious about FOSS and programs like GSoC and Outreachy.

Here, I'll sum up some of my initial experiences and how I came across the Outreachy program and took part in it.

So, what is Outreachy?

Outreachy (previously the Free and Open Source Software Outreach Program for Women) is a program that organises three-month paid internships with free and open-source software projects for people who are typically underrepresented in those projects.

How I came across Outreachy and my application process

I was going through internship programs to apply for this summer when I came across Outreachy, and I thought of giving it a try. The eligibility application was long, and it took some thought and time to write. After the two-day review process, I was declared eligible to participate in Outreachy. I applied late, so I started more than a week later than the others.

Perks of Outreachy

- You learn a lot: you can code and communicate as part of a community.

- You can travel: Outreachy provides a travel stipend of $500. You can attend the conferences, hackathons, and meetups you like without any constraints. Woaaahhhhh!!!

- Blogging: you write blog posts about the work done during the internship, which helps a lot in documenting projects and provides guidance to other web users.

Choosing an Organisation

This was the most difficult step for me. The program had a huge list of organisations and projects, and at first only a portion of the projects had been announced. As I went through them, I began to think I wasn't made for it. I had no connection or similarity whatsoever with any of the projects. The tech stacks and ideas were so different from my skills. I did come across one Mozilla project for a web extension. I thought of contributing and went through Bugzilla, but there the mentors had suggested that the project already had many contributors competing for it, so they would like new ones to go for other projects. I was like, boom, the only project I was somewhat connected to is full.

So maybe I should drop the idea of Outreachy? But that thought soon came to an end as, within a week, another batch of projects was announced.

At that time, I came across an organisation called Public Lab and its project on UI improvements for Public Lab's main platform, publiclab.org, which is built on Ruby on Rails. Public Lab was planning to revamp and redesign the UI of the platform as a whole. I felt that the project idea was made for me. I immediately introduced myself in their chat channel to get to know the project ideas, the work, and the community.

Public Lab Organisation as a community

Public Lab is a community for DIY environmental investigation. They especially welcome contributions from people from groups underrepresented in free and open source software!

Believe me, the organisation is so welcoming that you'll feel overwhelmed.

You have not just humans but even a welcome bot to welcome you.

See!!! Just follow and love open source like us and you are welcome in our community. Tadaaa!!!

They highly encourage you towards your first contribution in Public Lab. They have specific first-timers issues to contribute to, where they provide complete steps for making an open source contribution. The mentors are helpful, approachable, and highly active and talented. The organisation holds open-hours community sessions for everyone to join. Everyone is encouraged to share ideas that will help make things better. We have weekly check-ins where we are supposed to share our goals for the week. They are an enthusiastic and friendly org, tbh.

Public Lab has seen more than 400 new contributors in just one of its projects.

PS: The environment is freaking awesome.

My contribution for Outreachy with Public Lab

After I selected the project I wanted to contribute to, I set up the project locally. The local setup took a bit of time, as it was in Ruby on Rails and I'd never used it before.

So, I started contributing with UI-based issues. I tried to work on different sections of the project to understand it better. I opened issues and made fixes. On the last day for submitting my final application, I listed my contributions. After submitting the final application, I didn't stop but instead kept on contributing.

Now here I am, working on the UI Improvements project at Public Lab under the Outreachy program.

Thanks for giving it a read ❤

I shared it on Medium.

https://twitter.com/DugtalLekhika/status/1133401881949159424

You may visit and give it a clap.

Follow related tags:

blog soc summer-of-code outreachy

Public Lab's contribution framework (deep dive 2019)

7 Months

I recently reached my 7th month as a contributor to Public Lab's open source software, a milestone that, amongst other things, brought to my attention that it's now or never to write a blog post.

In the spirit of writing about something that really does deserve a full 7 months of consideration, and still keeps me thinking, I want to shine light on Public Lab's contribution framework.

Open source communities are fascinating to think about because they come in all shapes and sizes. Most successful communities share certain commonalities (often called "best practices"), but underneath that, their foundations are a testament to achieving a specific ethos and set of goals.

Thinking about Public Lab's contribution framework, founded on 3 simple steps, has allowed me to shape my understanding of the all-encompassing open source community, and what makes Public Lab's sub-community so special.

FTOs

My first PR ever was on Oct. 16th, 2018, when I completed what is known in some beginner-friendly OSS communities as an "FTO" (First Timers Only) issue. The labels we use for these, which you might find across GitHub, are "first-timers-only" and "good first issue". To this day, I have opened 21 of these issues myself.
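For anyone who wants to hunt for these issues, here is a minimal sketch of how the two labels above could be turned into GitHub issue-search queries. It only builds the URLs and makes no request. The helper name `fto_search_url` is made up for illustration, and `publiclab/plots2` is used purely as an example repository slug.

```python
from urllib.parse import urlencode

# The two labels named in the post; the repository slug below is only an example.
FTO_LABELS = ("first-timers-only", "good first issue")


def fto_search_url(repo: str, label: str) -> str:
    """Build a GitHub issue-search URL for open issues carrying one label.

    Hypothetical helper for illustration; it builds the URL and sends nothing.
    """
    # Quote the label so multi-word labels such as "good first issue" stay one term.
    query = f'repo:{repo} is:issue is:open label:"{label}"'
    return "https://api.github.com/search/issues?" + urlencode({"q": query})


if __name__ == "__main__":
    for label in FTO_LABELS:
        print(fto_search_url("publiclab/plots2", label))
```

Opening either printed URL in a browser or an HTTP client should list the matching open issues, subject to GitHub's rate limits.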

FTO issues are the glue that holds PL's framework together, and teach us invaluable lessons about open source community and culture. (More on this later).

Workflow

PL follows a 3 step process for initiating new members:

- A new contributor completes a guided "FTO" issue

- Then they complete any regular issue, often labeled "help wanted"

- Lastly, they open their own FTO and help guide a new contributor through it

These 3 steps are incredibly well thought out.

Primer - Openness

One of the most admirable and celebrated aspects of open source is the various acts of kindness amongst contributors in a community. Open source communities make learning an inherently social process, so contributors share an interest in mutual aid and interaction.

Although socialization is inherent, communities still bear the responsibility to facilitate an optimal environment for it. The foundation a community lays down for workflow and culture shapes contributors' interactions.

Public Lab's approach works on the principle of openness. Openness as a paradigm of organization:

This: Openness is an overarching concept or philosophy that is characterized by an emphasis on transparency and free, unrestricted access to knowledge and information, as well as collaborative or cooperative management and decision-making rather than a central authority.

More at Wikipedia

Includes this (open source software): 4. (computing) degree of accessibility to view, use, and modify computer code in a shared environment with legal rights generally held in common and preventing proprietary restrictions on the right of others to continue viewing, using, modifying and sharing that code.

But also openness as a community mantra (i.e. receptiveness, kindness, patience).

As the landscape of open source software changes rapidly, with more users joining GitHub in 2018 than in its first 6 years combined, some communities lead the way in developing a new collaborative model for this uncharted territory.

PL's workflow is exemplary of how this is done. Its approach is multi-faceted, but the focus here is on its ability to cleverly weave community values into its foundational workflow, which carries the most palpable benefits.

Its workflow is a cycle of reciprocity, in which every contributor experiences both ends. From a contributor's first contribution, an air of gratitude starts spreading which they'll carry on.

This is summarized well by the wise words of Google's Summer of Code mentor guide:

"Consider treating every patch like it is a gift. Being grateful is good for both the giver and the receiver, and invigorates the cycle of virtuous giving"

This cycle starts with step 1.

Step 1

provides the opportunity to learn about PL's community culture firsthand while working through an FTO guided by another contributor. This can often be a contributor's first pull request ever, which is one of the toughest milestones in contributing to OSS. Two notable ways PL empowers contributors through its culture of kindness and gratitude:

- Welcome bot: each new contributor receives a congratulatory message on their first PR:

- Every FTO receives feedback from reviewers or maintainers. Commenting something as small as "thank you" makes an impact. Surprisingly, this is not necessarily standard etiquette across open source communities.

A quick anecdote: featuring a community that suffers from the absence of any structured empowerment system

Recently, I stumbled upon some incorrectly translated Russian-language configuration files for a platform-specific i18n community. I opened two PRs. Shortly after, they were merged without any communication exchanged besides the merge notifications.

It's not that I wanted a gold star. My main problem with this was that the process felt like a side conversation with myself rather than a community contribution, which is not a sought-after experience in open source.

Communication is an axiomatic part of a community; this is not a revelation. Any communication is good (a merged pull request is a whole lot better than nothing), but not all communication is the same.

Despite having about 400 contributors and 3,500 stars, the repository still has a number of translation inaccuracies that a single, motivated contributor could fix. It is not meeting its full potential because its large contributor pool is not, well, contributing much after a few initial PRs.

Side note: full respect to this community and what they have accomplished. This anecdote only serves to point out the opportunity cost of expending fewer resources on contributor engagement, and that contributor participation is not something that's guaranteed to a community.

Step 2

provides the opportunity to rinse and repeat the Git workflow learned from the FTO, with the added challenge and opportunity to create and implement self-directed code improvements.

Step 3

creating your own FTO, provides the opportunity to give back to the community - specifically by guiding new contributors through the same process you just worked through recently.

The result is a community in which the contributors are passionate about helping out: see the free response results of PL's contributor survey.

It also creates an atmosphere of mutual support in which contributors experience being both the supported and the supporter. The implications behind this are important. Contributors that make it to the supporter side and open an FTO typically share a common understanding - that every contributor here is challenging themselves to take on new things and grow, regardless of experience level. The prospect of airing one's work in public feels less intimidating within the community. It is completely reframed as a means of connecting to a community, and materializes one of the key benefits of open source: shared knowledge.

Sustainability

PL's community expansion has largely been self-sustainable so far.

As each new member is expected to add at the very least one new FTO, the community grows exponentially.
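To make the arithmetic behind that claim concrete, here is a toy model and nothing more. It assumes, purely for illustration, that every FTO is completed by exactly one newcomer, that nobody leaves, and that each newcomer opens a fixed number of FTOs in the following round. None of these numbers come from Public Lab's data, and the function name is made up.

```python
def total_contributors(ftos_per_member: float, rounds: int, founders: int = 1) -> float:
    """Cumulative contributors after `rounds` rounds of FTO-driven recruiting.

    Toy assumptions (not from the post): every FTO is completed by exactly
    one newcomer, nobody leaves, and each newcomer opens `ftos_per_member`
    FTOs in the following round.
    """
    total = float(founders)
    cohort = float(founders)  # members who joined in the most recent round
    for _ in range(rounds):
        cohort *= ftos_per_member  # newcomers recruited by the last cohort's FTOs
        total += cohort
    return total


if __name__ == "__main__":
    for rate in (1, 2):
        print(rate, [total_contributors(rate, n) for n in range(6)])
```

Under these assumptions, exactly one FTO per member adds the same number of people every round, while anything above one compounds: with two FTOs each, every cohort is twice the size of the one before. That is why "at the very least one" matters.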

As cited in PL's 2019 community report:

"we have grown over 400% in the past year to approximately 500 contributors over the total lifetime of the project."

More importantly, contributors proceed to take on leadership roles, such as joining the "reviewers" team, at a pace that reasonably matches growth. The result is a system of distributed responsibility, reminiscent of how a blockchain is governed.

Setting a clear path for contributors to be able to advance to the role of a "reviewer" is an important aspect of openness that leads to sustainability, as it creates an incentive for contributors to become more deeply involved in the community. In PL, this path is the completion of the workflow.

These same contributors always go the extra mile. Off the top of my head, projects spearheaded by contributors in the last few months include: a weekly check-in system (implemented by me and refined by @bsugar), revamping the community contributor page, updating countless pieces of documentation to clarify information for newcomers, establishing a monthly open hour call, and most recently, a system for tracking FTOs (by @gauravano, appointed community coordinator).

The benefit of self-sustainability is critical to understanding that the trend towards openness has benefits previously unreachable with an old leadership model. (What comes to mind is a repo advertising "New maintainer wanted" on GitHub after the old network is exhausted.)

Open Source in 2019

PL's community growth aligns with a general growth trend in open source. Consider the 2018 GitHub stats: for example, a third of all pull requests ever created were opened in 2018.

As more new contributors look for a fit in an open source community on GitHub than ever before, openness is a useful mantra to follow in 2019 if an organization seeks to inspire new contributors and scale (relatively) organically, and has the flexibility to restructure.

To ensure the consistent application of a community's desired open source practices, this framework for open governance needs to be built on a tailored and well thought out foundation. Public Lab's simple workflow and its emphasis on a positive, supportive culture are a great example of how one community approaches structuring its foundation and managing contributor growth and ethos.

Follow related tags:

software blog code barnstar:basic

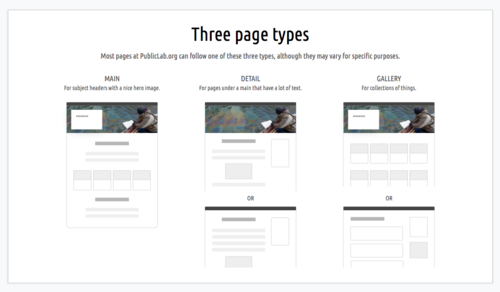

Introducing a (draft) Style Guide for Public Lab

For a long time, folks making new pages and interfaces at PublicLab.org have not had much (if any) guidance or direction, and, understandably, have brought their own ideas to the project. This shows great initiative, but we could do a better job setting some clear design conventions, and the whole site would benefit from some more consistency.

@sylvan and I have been working on a Style Guide to serve this purpose. This guide is designed to support coders, designers, and writers building and designing pages on PublicLab.org.

We're at a point where we could use some input and feedback, so here's a draft!

Our goals include:

Simpler and more consistent design

- Easier to understand and use: clear and well-explained guidance for design will make it easier to start doing UI work at Public Lab, and easier for people using PublicLab.org to use.

- Less customization: using standard libraries like Bootstrap 4 (http://getbootstrap.com) and less custom code will make it less fragile, more compatible and accessible, and easier to upgrade. We strongly encourage using widely familiar interface design conventions, so people don't have to "learn how to use PublicLab.org."

- Easier to maintain: with a set of standards, it will be clearer what UI /should/ look like, and less likely that it will diverge and become inconsistent or messy. Less code will be easier to maintain at a high level of quality in the long term.

- More support and guidance for people designing new pages/interfaces

Increased stability

- Better organized UI code: cleaning up our code, reducing redundancy, and standardizing (and re-using) templates will make it easier for everyone to do good UI design overall.

- Better UI tests: our new System Tests enable testing of complex client/server interactions exactly like a user will experience them. We aim for high coverage: https://github.com/publiclab/plots2/issues/5316

- Fewer UI breakages: all of this should contribute to fewer bugs system-wide.

This guide won't cover every situation, but will establish an overall approach to UI design that all our work should build on cohesively.

Check out the draft style guide here -- comments and input are very welcome!

We'll be adding more and more annotations as we go, so that it's clear /why/ we've made these recommendations, and how to apply them.

We'll also be following up in a later version with code samples and links to templates!

Update

For developing more complex mockups and prototypes, this may be a great tool!

Follow related tags:

website design blog code

A big WELCOME to our Outreachy/GSoC team for 2019!

Hi, all! We will have a record-size team this summer, with 2 Outreachy and 13 GSoC fellows; we've never had a group this big! We also have a bigger mentor group, but we will certainly need students to help one another. I wanted to reach out and welcome everyone, but also set the tone for the kind of mutual support we will need to succeed together.

Here are the announcements - congratulations!!!:

https://summerofcode.withgoogle.com/organizations/5544218626359296/

https://www.outreachy.org/alums/

I was thinking of asking people individually to keep an eye on someone else's work even as they do their own. Each person might have a set of skills they could use to help someone else, whether it's writing tests, making FTOs, solving database issues, designing UIs, or creating clear and simple documentation. We'll need to rely on each other, students helping students above all, to pull this off and ensure everyone has a great summer, learns a lot, and finds success!

A note to those who we weren't able to accept in this year's program: we had over 20 fantastic proposals, and if we had the slots available and the mentoring team, we would surely have accepted more. Please know that we are extremely grateful for your work with us to date and that we welcome you to continue being part of this community. We considered many factors, among which are the prioritization of different projects, our ability to support different kinds of projects with good mentorship, and much more. So our inability to accept you should not be taken in a discouraging way, or as a suggestion that your proposal and your skills were not impressive and exciting on their own merits. Thank you for understanding and we hope you'll apply again ❤️

Follow related tags:

gsoc blog code soc

Public Lab job posting: Business and Operations Manager

Start Date: Mid June 2019

Location: New Orleans, LA

Terms: Full time

The Public Laboratory for Open Technology and Science (Public Lab) is a community, supported by a 501(c)(3) non-profit, that develops and applies open-source tools to environmental exploration and investigation. By democratizing inexpensive and accessible Do-It-Yourself techniques, Public Lab creates a collaborative network of practitioners who actively re-imagine the human relationship with the environment.

This position is based in New Orleans and will be responsible for managing the business and operations of the Public Lab nonprofit. Day-to-day tasks include bookkeeping and financial management, supporting human resources and keeping a multi-office, remote team organized through meeting coordination and internal staff communications. The goal of this position is to improve and maintain efficiency and productivity of the organization, and to oversee the management and coordination of fiscal activities for Public Lab. This position reports directly to the Executive Director.

Major Position Responsibilities

Financial

- Work with our outside accountant to manage the day-to-day bookkeeping processes at Public Lab including monthly closes, invoicing, reimbursements, and donation and sales processing.

- Oversee all financial reporting activities for the organization, such as: running balance sheet and profit and loss reports, reporting on organizational and grant budgets and compiling financial reports.

- Maintain processes to provide timely financial and operational information to the Executive Director, staff and Board of Directors.

- Support the development of Public Lab's annual budget and program budgets.

- Manage the financial relationship with an organization Public Lab fiscally sponsors, including reporting and fund disbursement.

- Serve as a liaison to our CPA firm and manage the yearly audit process.

- Support project managers in increasing their capacity for management of grant budgets.

- Ensure compliance with rules from granting organizations.

- Identify and communicate changes that could increase the efficiency of financial workflows to support stronger reporting practices and organizational growth.

Human Resources

- Maintain and administer the relationship with Public Lab's third-party payroll and benefits provider.

- Manage internal staff requests related to HR including time keeping and requests for time away.

- Facilitate staff trainings, reviews, onboarding and departure from the organization.

- Manage and update the employee handbook, staff policy and procedure documents and employee files.

- Manage the hiring and training processes for new staff members.

- Draft and coordinate the completion of contracts and MOUs with partners, contractors and fellows.

Operations

- Support activities related to monthly and quarterly meetings with the Board of Directors and coordinate logistics for staff meetings and retreats.

- In coordination with our payroll company and CPA firm, ensure compliance with reporting requirements and tax filings.

- Maintain organization insurance policies and update as necessary.

- Support Public Lab program staff in event logistics and scheduling.

All Public Lab staff are expected to adhere to the Public Lab policies, procedures, values and community-wide Code of Conduct.

Education/Training

A Bachelor's degree or equivalent in finance, business or other related field is preferred and 3-5 years of demonstrated job experience in a related field is required.

Additional Preferred Qualifications:

- Experience at organizations with budgets around $1 million.

- Knowledge of nonprofit accounting including grant tracking and reporting.

- Experience with organization finances that include multiple funding sources (grants, memberships, earned revenue) for different organizational programs.

Skills, Knowledge, and Characteristics Required

- Expertise in using financial software including QuickBooks (online edition) for nonprofits, Expensify and Bill.com.

- Proficiency in using a variety of collaborative software such as Slack, Google Docs, and Dropbox.

- Experience using CRM databases such as Salesforce.

- Excellent attention to detail and organizational skills.

- The ability to balance multiple competing tasks and requests and enjoyment from working efficiently towards goals and deadlines.

- A desire for and fulfillment from working on a team, but also an ability to work independently on job tasks.

- Incredible interpersonal skills, demonstrating great communication, kindness, respect, and patience within our collaborative work environment.

- An enjoyment of problem solving and the ability to put this to use in areas where organizational operations could be improved.

- Willingness to work remotely with colleagues, including your manager.

Compensation is between $40,000 and $50,000, based on experience and qualifications. Public Lab offers a benefits package that includes four weeks starting vacation time in addition to federal holidays and personal days, 75% coverage of health benefits, a 401(k) and paid sabbaticals. This job requires occasional travel and work outside regular business hours.

Please send a single document containing a cover letter and resume to jobs@publiclab.org by May 19, 2019. No phone calls please.

Public Lab is an equal opportunity employer committed to a diverse, multicultural work environment. We encourage people with different ability sets, people of color, and people of diverse sexual orientations, gender expressions and identities to apply.

Follow related tags:

nonprofit blog jobs