About me

Name : Forcha Pearl Fri

Personal Email : forchapearl1@gmail.com

Github : https://github.com/Forchapeatl

Gitter Handle : forchapeatl

Affiliation: University of Buea, Faculty of Engineering and Technology.

Location: Buea, Cameroon

Timezone: West African Time (UTC+1)

Project description

This project aims at improving the User Interface at Infragram.org by fixing some known bugs and implementing various features like cross-browser drag-and-drop, Full Screen mode enhancement, Video NDVI analysis, and some UI improvements.

Abstract/summary (<20 words):

Problem

What problem(s) does your project solve?

After researching the current Infragram UI, I identified the following problems.

- No option to close WebCam input: There is no available option to turn off the Infragram Web Camera. This overloads the internal buffer memory and may cause a time lag between reading and releasing the input feed.

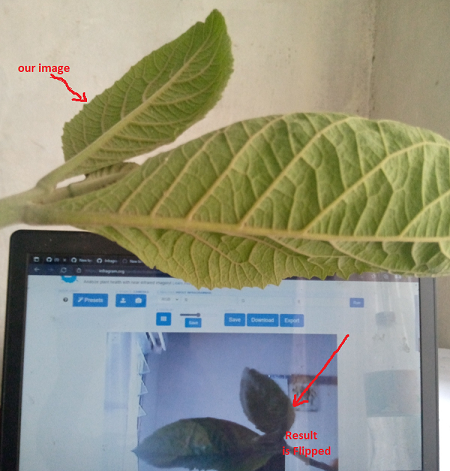

- Front camera does not mirror: The video stream of the front camera is not flipped. This causes confusion, as most users are used to the mirrored view of a front camera.

- No image/video file filters on Infragram's file input: This leaves users uncertain about which files the infrared analysis accepts.

- The full screen icon is made invisible whenever an image is set for analysis. This makes it difficult to identify the button required for full screen mode.

- No functionality is provided to exit the Infragram full screen display. This makes the interface suboptimal and reduces user engagement.

- No example images on the presets modal to help users choose the right filter. See Issue #226.

Project Goals

1. Implement Cross-browser drag-and-drop

infragram/src/file-upload.js

- Define the drop zone (The target element for the file drop): I will include the HTML "ondrop" and "ondragover" attributes to the desired element.

- Use the Bootstrap CSS library to style our drop zone element.

- Define JavaScript events to handle the file dragover and file drop processes.

- Define events to pass the dropped file to our infragram canvas for Analysis.
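The drop-zone steps above can be sketched as follows. This is a minimal sketch, not the code in infragram/src/file-upload.js: it uses `addEventListener` rather than the HTML `ondrop`/`ondragover` attributes, and the names `pickMediaFile`, `attachDropZone`, and `onFile` are hypothetical.

```javascript
// Return the first dropped file whose MIME type is an image or a video, or null.
function pickMediaFile(files) {
  for (const file of Array.from(files)) {
    if (/^(image|video)\//.test(file.type)) return file;
  }
  return null;
}

// Wire up a drop zone. `zone` is any element (for example document.body);
// `onFile` is a hypothetical callback that hands the file to the Infragram canvas.
function attachDropZone(zone, onFile) {
  zone.addEventListener("dragover", (event) => {
    // Cancelling dragover marks the element as a valid drop target.
    event.preventDefault();
  });
  zone.addEventListener("drop", (event) => {
    // Stop the browser from opening the dropped file in the tab.
    event.preventDefault();
    const file = pickMediaFile(event.dataTransfer.files);
    if (file) onFile(file);
  });
}
```

In the page this might be called as `attachDropZone(document.body, loadIntoCanvas)`, where `loadIntoCanvas` stands in for whatever function feeds a file to the analysis.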

2. Implement a new interface (using Bootstrap 4) which is full screen with a toolbar, solicit and incorporate user input.

- Install Bootstrap 4 and uninstall Bootstrap 3

infragram/package.json

- Identify parent element containing the toolbar and the canvas.

- Replace full screen icon transparent background with color (solution to problem 4).

- Check which FullScreen method is available on browser.

- Create a function to enter and exit fullscreen mode whenever requested.

- Assign the FullScreen mode function to the identified parent div Element.

infragram/src/fullscreen.js

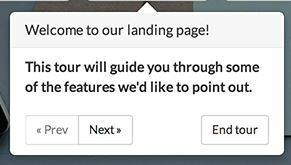
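A rough sketch of the fullscreen steps listed above, assuming the standard Fullscreen API plus its vendor-prefixed variants. The helper names `firstAvailable` and `toggleFullscreen` are hypothetical and are not taken from infragram/src/fullscreen.js.

```javascript
// Find the first method name that exists on `target` and return it bound, or null.
function firstAvailable(target, names) {
  const name = names.find((n) => typeof target[n] === "function");
  return name ? target[name].bind(target) : null;
}

// Enter fullscreen on `element` if nothing is fullscreen, otherwise exit.
// `doc` is normally the global `document`; it is a parameter so it can be faked.
function toggleFullscreen(element, doc) {
  const current =
    doc.fullscreenElement ||
    doc.webkitFullscreenElement ||
    doc.mozFullScreenElement ||
    doc.msFullscreenElement;

  if (current) {
    const exit = firstAvailable(doc, [
      "exitFullscreen",
      "webkitExitFullscreen",
      "mozCancelFullScreen",
      "msExitFullscreen",
    ]);
    if (!exit) return "unsupported";
    exit();
    return "exit";
  }

  const enter = firstAvailable(element, [
    "requestFullscreen",
    "webkitRequestFullscreen",
    "mozRequestFullScreen",
    "msRequestFullscreen",
  ]);
  if (!enter) return "unsupported";
  enter();
  return "enter";
}
```

Passing the parent element that holds both the toolbar and the canvas keeps the toolbar visible in fullscreen, so the same button can be used to exit.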

3. Add Welcome modal and tooltip tutorial with Bootstrap tour API

infragram/index.html

- Identify all HTML elements necessary for the walk through guide.

- Write messages describing the functionality of each element.

- Set up Bootstrap tour steps with identified HTML elements and their corresponding messages.
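One possible shape for the tour setup is sketched below. It assumes Bootstrap Tour's `Tour` constructor with `steps`, `init()`, and `start()`; the selectors and messages are placeholders, since the real element ids would come from infragram/index.html.

```javascript
// Placeholder selectors and messages (hypothetical, not taken from index.html).
const tourEntries = [
  { selector: "#upload-button", title: "Upload", message: "Choose or drop an image or video to analyse." },
  { selector: "#preset-button", title: "Presets", message: "Pick a filter preset for your camera." },
];

// Convert (selector, title, message) entries into Bootstrap Tour step objects.
function buildTourSteps(entries) {
  return entries.map((entry) => ({
    element: entry.selector,
    title: entry.title,
    content: entry.message,
    placement: "bottom",
  }));
}

// `TourClass` is expected to be the global `Tour` provided by Bootstrap Tour.
function startTour(TourClass, entries) {
  const tour = new TourClass({ steps: buildTourSteps(entries) });
  tour.init();
  tour.start();
  return tour;
}
```

Keeping the messages in a plain array makes it easy to review the wording with the community before wiring anything to the page.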

3.1. Transfer Q&A Section to help menu

4. Integrate the latest cross-platform WebRTC camera selection API, based on getUserMedia.

infragram/src/io/camera.js

- Check for mediaDevices API Device Support.

- Request permission to make use of the media input devices.

- Let the user choose from their available list of video devices in HTML option tag by calling the enumerateDevices method of WebRTC.

- Display the Video Stream on the Browser.

- Flip the video stream horizontally to mirror the subject when the front camera is selected (solution to problem 2).

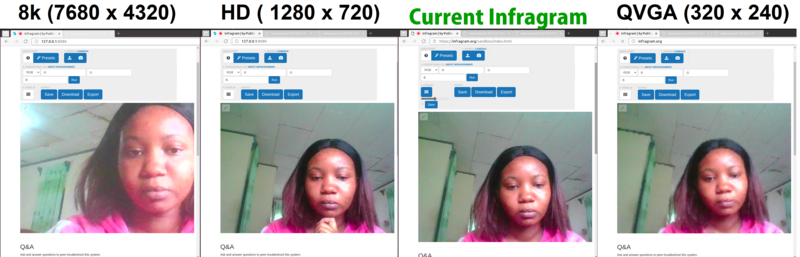
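The camera steps above might look roughly like the sketch below, using the standard `navigator.mediaDevices` API. The function names are hypothetical, and mirroring relies on the track reporting `facingMode`, which many desktop webcams do not, so treat that part as an assumption. A `stopCamera` helper is included because problem 1 asks for a way to turn the webcam off.

```javascript
// Keep only cameras from the list returned by mediaDevices.enumerateDevices().
function listVideoInputs(devices) {
  return devices.filter((device) => device.kind === "videoinput");
}

// Front ("user") cameras are mirrored; anything else is left as-is.
function mirrorTransform(facingMode) {
  return facingMode === "user" ? "scaleX(-1)" : "none";
}

// Open the chosen camera and show it in `videoElement`.
// `mediaDevices` is normally navigator.mediaDevices.
async function openCamera(mediaDevices, videoElement, deviceId) {
  if (!mediaDevices || typeof mediaDevices.getUserMedia !== "function") {
    throw new Error("mediaDevices API is not supported in this browser");
  }
  const stream = await mediaDevices.getUserMedia({
    video: deviceId ? { deviceId: { exact: deviceId } } : true,
  });
  const [track] = stream.getVideoTracks();
  videoElement.srcObject = stream;
  videoElement.style.transform = mirrorTransform(track.getSettings().facingMode);
  return stream;
}

// Release the camera so the browser stops reading from it.
function stopCamera(stream) {
  stream.getTracks().forEach((track) => track.stop());
}
```

The entries from `listVideoInputs` carry a `deviceId` and a `label`, which is enough to fill the HTML select options.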

5. Accept multiple video resolutions

infragram/src/io/camera.js

- Specify video heights and Widths on MediaStream Constraints.

- Apply Bootstrap styling to the HTML select tag that lists the devices returned by the MediaDevices enumerateDevices method.
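As an illustration of specifying heights and widths in the MediaStream constraints, here is a small sketch. It assumes the select option values are strings such as "1280x720"; that format and the helper names are hypothetical.

```javascript
// Turn a select value such as "1280x720" into numeric dimensions.
function parseResolution(value) {
  const match = /^(\d+)x(\d+)$/.exec(value);
  if (!match) throw new Error(`Unrecognised resolution: ${value}`);
  return { width: Number(match[1]), height: Number(match[2]) };
}

// Build a getUserMedia constraints object for the requested size.
// `ideal` lets the browser fall back to the closest size the camera supports.
function buildVideoConstraints(deviceId, resolution) {
  const video = {
    width: { ideal: resolution.width },
    height: { ideal: resolution.height },
  };
  if (deviceId) video.deviceId = { exact: deviceId };
  return { video };
}
```

An onchange handler on the select could pass `buildVideoConstraints(id, parseResolution(select.value))` to `getUserMedia` to restart the stream at the new size.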

6. Perform Video analysis on browser.

- Create hidden video element on Infragram page.

- Allow file input type to accept only image and video files (Solution to problem 3)

- Load the dropped video file into our HTML video element.

- Scale the dropped video to fit the size of the Infragram canvas element.

- Create and Design Play and Pause buttons to overlay on infragram Canvas.

- Attach Designed buttons to play and pause events of our video element.

- Hide and display play / pause upon selection of image or video file.
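Two of the steps above, fitting the video to the canvas and toggling playback, can be sketched as below. This assumes the video should keep its aspect ratio and be centred in the canvas, which the proposal does not state; `fitRect` and `togglePlayback` are hypothetical names.

```javascript
// Compute where a srcWidth x srcHeight video should be drawn inside a
// dstWidth x dstHeight canvas so that it fits fully and stays centred.
function fitRect(srcWidth, srcHeight, dstWidth, dstHeight) {
  const scale = Math.min(dstWidth / srcWidth, dstHeight / srcHeight);
  const width = srcWidth * scale;
  const height = srcHeight * scale;
  return {
    x: (dstWidth - width) / 2,
    y: (dstHeight - height) / 2,
    width,
    height,
  };
}

// Handler for a single play/pause overlay button bound to a <video> element.
function togglePlayback(video) {
  if (video.paused) {
    video.play();
    return "playing";
  }
  video.pause();
  return "paused";
}
```

For example, a 1280 by 720 video in a 640 by 480 canvas is drawn at 640 by 360 with a 60 pixel margin above and below.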

7. Allow conversion of the whole video (WebGL video manipulation).

infragram/src/processors/javascript.js

- Allow conversion of the whole video.

- Grab hold of the video and canvas elements.

- Grab the canvas's webgl-context so I can draw on it.

- Attach draw function to the "play" event on the video element.

- Draw the current frame of the video directly onto the infragram canvas.

- Capture the canvas on each animation frame and store the data in a chunks array.

- Construct a blob from our array when recording stops (video ends).

- Create a second video element.

- Create a URL from the blob and pass it as the source of our second video element.
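The recording steps above could be approached with `canvas.captureStream()` and `MediaRecorder`, as in this sketch. It is one possible technique rather than the confirmed plan; `recordCanvas` is a hypothetical name and the 30 fps capture rate is an assumption.

```javascript
// Record whatever is drawn on `canvas` until `video` fires "ended".
// `RecorderClass` is normally the browser's MediaRecorder.
// Resolves with the recorded chunks and the recorder's MIME type.
function recordCanvas(canvas, video, RecorderClass) {
  return new Promise((resolve, reject) => {
    const chunks = [];
    const recorder = new RecorderClass(canvas.captureStream(30));

    recorder.ondataavailable = (event) => {
      if (event.data && event.data.size > 0) chunks.push(event.data);
    };
    recorder.onerror = (event) => reject(event.error || event);
    recorder.onstop = () => resolve({ chunks, mimeType: recorder.mimeType });

    // Stop recording when the source video finishes playing.
    video.addEventListener("ended", () => recorder.stop(), { once: true });
    recorder.start();
  });
}

// Browser usage (not executed here):
//   const { chunks, mimeType } = await recordCanvas(canvas, video, MediaRecorder);
//   const blob = new Blob(chunks, { type: mimeType });
//   secondVideo.src = URL.createObjectURL(blob);
```

The draw function attached to the "play" event keeps painting frames onto the canvas while this recorder runs, so the output video contains the processed frames.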

8. Select multiple output resolutions.

9. Write UI tests for new Infragram UI, using Jest.

- Install Jest using yarn

- Create test.js files with respect to all infragram UI modules.

- Include test script in package.json file.

- Run yarn test on the previously created test files.

10. Refine Infragram presets modal.

11. Allow zooming/panning of video within canvas

- Define the mouse and touch events required for zooming/panning.

- Grab the current context of Canvas element.

- Set Context.scale to enable zooming on Canvas.

- Set Context.translate to create our panning effect.
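The zoom and pan arithmetic behind these steps can be sketched as below. It assumes a 2D canvas context (`translate` and `scale` are 2D-context methods; a WebGL canvas would need the same numbers passed to the shader instead), and the `view` object with `scale`, `tx`, `ty` is a hypothetical structure.

```javascript
// Zoom by `factor` while keeping the canvas point (px, py) under the cursor fixed.
function zoomAt(view, px, py, factor) {
  return {
    scale: view.scale * factor,
    tx: px - (px - view.tx) * factor,
    ty: py - (py - view.ty) * factor,
  };
}

// Shift the view by a drag distance in canvas pixels.
function pan(view, dx, dy) {
  return { scale: view.scale, tx: view.tx + dx, ty: view.ty + dy };
}

// Apply the view to a 2D context before drawing the current frame.
function applyView(ctx, view) {
  ctx.setTransform(1, 0, 0, 1, 0, 0); // reset any previous transform
  ctx.translate(view.tx, view.ty);
  ctx.scale(view.scale, view.scale);
}
```

A wheel handler would call `zoomAt` with the cursor position, a mouse or touch drag handler would call `pan`, and the draw loop would call `applyView` before each `drawImage`.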

Timeline/milestones

Pre-GSoC Period - Till 20th May

- Understanding the idea and getting doubts resolved as soon as possible.

- Going through already available code, understanding its working.

- Solving already opened issues related to the project.

- Writing some tests to get a head start.

Community Bonding (20 May, 2022 - 12 June, 2022)

- Get familiar with the community and attend Public Lab open calls to get an idea what needs to be done.

- Discuss Project with mentors and brainstorm some ideas which could have multiple approaches.

Week 1 (12 June, 2022 - 19 June, 2022)

- Start working on cross-browser drag-and-drop on the entire page

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 2 (19 June, 2022 - 25 June, 2022)

- Work on implementing a new interface (using Bootstrap 4) for Infragram.org which is full screen.

- Make refinements to the toolbar, solicit and incorporate input from user community.

- Communicate with Outreachy participant.

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 3 (25 June, 2022 - 30 June, 2022)

- Work on transferring Q&A feature into a Help menu

- Communicate with Outreachy participant.

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

- Attend Public Lab open call for feedback from the user community.

Week 4 (30 June, 2022 - 6 July, 2022)

- Work on welcome modal and tooltip tutorial with Bootstrap tour.

- Communicate with Outreachy participant.

- Make corresponding UI changes.

- Create FTOs whenever possible

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 5 (6 July, 2022 - 13 July, 2022)

- Work on new cross-platform WebRTC video library for Safari iOS support

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 6 (13 July, 2022 - 20 July, 2022)

- Work on accepting multiple resolutions of video.

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 7 (20 July, 2022 - 25 July, 2022)

- Implement loop, pause, play and seek bar on canvas video (processing dropped video locally).

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 8 (26 July, 2022 - 3 August, 2022)

- Work on converting the whole video by recording from the canvas.

- Make corresponding UI changes.

- Write Tests for the changes made.

- Take feedback from mentors and improve.

Week 9 (3 August 2022 - 8 August, 2022)

- Work on UI tests for new Infragram UI.

- Create FTOs for newcomers.

- Write/modify documentation for the changes made during the internship.

See this page for guidance on breaking your plan up into small, self-contained parts: https://publiclab.org/notes/warren/01-18-2018/software-outreach-modularity-is-great-for-collaboration

Needs

I will need guidance from my mentors. Suggestions and feedback from all members of Public Lab will be welcome and will help me build and complete my project and contribute to the community.

Public Lab contributions

- Comments, to show overall community involvement (like helping others): Search * commenter:Forchapeatl org:publiclab (github.com)

- Open issues: Issues * publiclab/plots2 (github.com)

- Closed PRs: Pull requests * publiclab/plots2 (github.com)

- Open PRs: [open PRs Public lab](https://github.com/publiclab/plots2/pulls?q=is%3Apr+author%3AForchapeatl+sort%3Acreated-asc+is%3Aopen)

Experience

I am currently a 6th-year student at University of Buea, Faculty of Engineering and Technology, Cameroon, and have been doing web development right from the second year of my college. I have worked on projects based on JavaScript, React, and Ruby.

Teamwork

Regarding Public Lab, I have gained a lot of experience and guidance from every member of the community, especially @mathildaudufo. Working in Public Lab has been a great journey for me so far, and I hope the same for the future as well.

Passion

My friends and I are currently working on a water sanitation evaluation project. Infragram made us realize that by using infrared to calculate the NDVI of the vegetation surrounding each water source, we could estimate the degree of pollution of that water source. Knowing how healthy the vegetation appears, we can mark a water source as pure or harmful. Not only has this project brought us insight, it has also improved my health, as I now only consume vegetables based on their Infragram analysis.

Audience

This project will ease the work of researchers and curious people alike who use Infragram as a platform to analyse plant health and draw meaningful insights from their analysis. Furthermore, it will be an honor to implement added features which will make the Infragram experience more enjoyable and intuitive for all who use it.

Commitment

I don't have any conflicting commitments during the GSoC period and I can work full-time.

7 Comments

Hi @forcha, I really like that you outlined the problems you got in your research and included/linked their solutions in your project goals 🎉 . I like the mock ups and gifs they give a great flow of what you have in mind. Your milestone is well detailed, I like that you included communication with the Outreachy intern each week too. I have a few suggestions, I would like it if you could mention any libraries if any that you will be working with, you could also add any code snippets(existing or new ) where the changes will be and maybe link us to which files would be involved in some of the changes. Thanks.

unrelated I really like the banana image 😃

Thanks for posting!

@cess Thank you for the feedback, I have updated the proposal with existing code snippets where changes will be implemented. Could you please review it once again? Thank you!!

Hi @forcha this is a great proposal, thank you so much. Some feedback:

For this, we have linked in the issue to the Temasys library, which helped us to implement more of the modern Webcam API features in the Spectral Workbench library: https://github.com/publiclab/spectral-workbench.js/pull/172 & https://github.com/publiclab/spectral-workbench.js/issues/87

Could you look a bit at that implementation, which enabled things like cross-platform webcam access and resolutions, and I believe did not require manually writing out the resolution options as you've shown? I believe it was possible in that library to query the available resolutions the camera provides and to offer those.

What's more, I believe there may be a need to change the pixel dimensions of the canvas element AND the canvas context, in order to preserve the image size once we've fetched a higher resolution image from the camera. Can you try linking to the areas of the code where such changes would need to be made, or outline the questions you'd have to make such plans?

Finally, I suggest developing the full-screen version in a second index2.html file, or in a folder /v2/index.html, so that we can deploy it for testing even before it's fully ready to replace the original interface. That way you can roll out improvements incrementally and your first published version doesn't have to completely meet all the features of the previous interface. I hope that makes sense!

Thanks a lot, again, I appreciate your proposal's design work, attention to details, etc!

@warren Thank you for your resourceful feedback and references. This solution will be in three steps.

1. Replace existing `getUserMedia` library inclusions with `Temasys/AdapterJS` in the infragram/(vr/pi)index(2).html and infragram/package.json files. Every instance of the code below

`if (webRtcOptions.context === "webrtc") {...}`

will be replaced by

`if (webRtcDetectedType === 'plugin') {...}`

in the files infragram\src\io\camera.js and infragram\dist\infragram.js.

As `Temasys` is a WebRTC plugin with IE and Safari support, we will no longer need the code below in our infragram\dist\infragram.js file.

`if (webRtcOptions.context === "flash") {...}`

2. Accepting multiple video resolutions. We will adopt the same implementation of camera resolution selection and update from Spectral Workbench, where default height and width constraints are set for initial use and updated whenever desired by attaching an onchange event to our HTML select option.

3. Preserving image size upon camera resolution update. From what I understood, the canvas size is constant while the (hidden) webcam video changes. An approach would be to downsample each video frame (image) to fit the canvas size while disabling interpolation. To downsample an image, we need to turn each square of p * p pixels in the original image into a single pixel in the destination image, as discussed here: https://stackoverflow.com/a/19223362
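To make the p * p idea concrete, here is a minimal sketch that averages each block of an RGBA pixel buffer (the layout of `ImageData.data`). Averaging is an assumption about how the block is reduced to one pixel, the function name `downsample` is hypothetical, and the sketch assumes the width and height are multiples of p.

```javascript
// Box-filter downsample of an RGBA buffer: every p x p block of source
// pixels becomes one destination pixel holding the block's average colour.
function downsample(pixels, width, height, p) {
  const outWidth = width / p;
  const outHeight = height / p;
  const out = new Uint8ClampedArray(outWidth * outHeight * 4);

  for (let oy = 0; oy < outHeight; oy++) {
    for (let ox = 0; ox < outWidth; ox++) {
      const sum = [0, 0, 0, 0];
      for (let dy = 0; dy < p; dy++) {
        for (let dx = 0; dx < p; dx++) {
          const src = ((oy * p + dy) * width + (ox * p + dx)) * 4;
          for (let c = 0; c < 4; c++) sum[c] += pixels[src + c];
        }
      }
      const dst = (oy * outWidth + ox) * 4;
      for (let c = 0; c < 4; c++) out[dst + c] = sum[c] / (p * p);
    }
  }
  return out;
}
```

With a 1280 by 960 frame and a 640 by 480 canvas, p would be 2, so each destination pixel is the average of four source pixels.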

The second approach would be to scale down our WebGL texture, as discussed here: https://stackoverflow.com/a/19262385 With a video resolution of 1280 width by 960 height and a canvas size of 640 width by 480 height, we would have to scale down our canvas context by half.

@warren, please give me 48 hours to come up with a working implementation using the second approach. It's taking me a while to comprehend WebGL. I will post the implemented code snippet on Friday 22nd April 2022. I just need some time with WebGL. Thank you.

Hello @warren please have a look at the code to the proposed changes https://github.com/Forchapeatl/infragram/pull/1/commits

infragram/src/dist/infragram.js

`pScale = gl.getUniformLocation(ctx.shaderProgram, "uScale");`
`gl.uniform2fv(pScale, scale);`

infragram/index.html

**HD Resolution** `<canvas class="fullscreen" id="image" width="4600" height="3500"></canvas>`

**QVGA** `<canvas class="fullscreen" id="image" width="320" height="240"></canvas>`

The problem is that higher (8K) resolutions enlarge the webcam texture and therefore use a lot of GPU resources. This may hinder performance on some devices. I'm currently brainstorming for an optimal solution.

@warren, please, am I moving in the right direction here?

Hello and thank you! We are going to prioritize Outreachy feedback and reviewing as the deadline for our selections is due for that program earlier (May 4). So I'll get back to you on this as soon as we get through those, thanks for your patience!
